
I'm trying to access an S3 file from a Spark SQL job. I have already tried solutions from several posts, but nothing seems to work, maybe because my EC2 cluster runs the new Spark 2.0 built for Hadoop 2.7.

I set up Hadoop this way:

I build an uber-jar with sbt assembly, using:

When I submit my job to the cluster, I always get the following error:

    Exception in thread "main" org.apache.spark.SparkException: Job aborted due to stage failure: Task 0 in stage 0.0 failed 4 times, most recent failure: Lost task 0.3 in stage 0.0 (TID 6, 172.31.7.246): java.lang.RuntimeException: java.lang.ClassNotFoundException: Class org.apache.hadoop.fs.s3a.S3AFileSystem not found
        at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2195)
        at org.apache.hadoop.fs.FileSystem.getFileSystemClass(FileSystem.java:2638)
        at org.apache.hadoop.fs.FileSystem.createFileSystem(FileSystem.java:2651)
        at org.apache.hadoop.fs.FileSystem.access$200(FileSystem.java:92)
        at org.apache.hadoop.fs.FileSystem$Cache.getInternal(FileSystem.java:2687)
        at org.apache.hadoop.fs.FileSystem$Cache.get(FileSystem.java:2669)
        at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:371)
        at org.apache.spark.util.Utils$.getHadoopFileSystem(Utils.scala:1726)
        at org.apache.spark.util.Utils$.doFetchFile(Utils.scala:662)
        at org.apache.spark.util.Utils$.fetchFile(Utils.scala:446)
        at org.apache.spark.executor.Executor$$anonfun$org$apache$spark$executor$Executor$$updateDependencies$3.apply(Executor.scala:476)

It seems that the driver can read from S3 without problems, but the workers/executors cannot. I do not understand why my uber-jar is not sufficient.

I have also tried, without success, to configure spark-submit using:

--packages com.amazonaws:aws-java-sdk:1.7.4,org.apache.hadoop:hadoop-aws:2.7.3

PS: If I switch to the s3n protocol, I get the following exception:

java.io.IOException: No FileSystem for scheme: s3n
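One way to confirm where the S3A classes are actually missing is to probe the classpath directly. The sketch below is a hypothetical helper (`S3AClasspathProbe`, `probe` and `report` are illustrative names, not Spark or Hadoop APIs); it assumes plain Scala with no Spark dependency and checks the two classes supplied by the jars from the `--packages` line above: `org.apache.hadoop.fs.s3a.S3AFileSystem` (hadoop-aws) and `com.amazonaws.services.s3.AmazonS3Client` (aws-java-sdk).

```scala
// Hypothetical diagnostic helper (not a Spark or Hadoop API): reports whether
// the classes S3A needs can be loaded by the current JVM.
object S3AClasspathProbe {

  // Default classes to check: the S3A filesystem (hadoop-aws) and the
  // S3 client it uses (aws-java-sdk).
  val DefaultClasses: Seq[String] = Seq(
    "org.apache.hadoop.fs.s3a.S3AFileSystem",
    "com.amazonaws.services.s3.AmazonS3Client"
  )

  // Returns "ok", "missing", or "broken: <error class>" for one class name.
  def probe(className: String): String = {
    // Prefer the thread context class loader (Spark executors load user jars there),
    // falling back to this object's own loader if none is set.
    val loader = Option(Thread.currentThread().getContextClassLoader)
      .getOrElse(getClass.getClassLoader)
    try {
      // initialize = false loads the class and its superclasses without running
      // static initializers; other referenced classes (e.g. the AWS SDK) are
      // resolved lazily, so the SDK class is probed separately.
      Class.forName(className, false, loader)
      "ok"
    } catch {
      case _: ClassNotFoundException => "missing"
      case e: LinkageError           => s"broken: ${e.getClass.getName}"
    }
  }

  // Probes every class name and returns name -> status.
  def report(classNames: Seq[String] = DefaultClasses): Map[String, String] =
    classNames.map(name => name -> probe(name)).toMap

  def main(args: Array[String]): Unit =
    report().foreach { case (name, status) => println(s"$name -> $status") }
}
```

Calling `report()` in driver code shows what the driver sees. To test the executors instead, the same call can be run inside tasks, for example `sc.parallelize(1 to 4, 4).map(_ => S3AClasspathProbe.report()).collect()`, provided the probe is compiled into the application jar. Note that the stack trace in the question fails inside `Executor.updateDependencies`, that is, while the executor is fetching job dependencies through a Hadoop `FileSystem`; if that step fails, the probe task fails the same way, which itself points at the executor classpath rather than the job code.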

Yuval Itzchakov


elldekaa

2 Answers

Actually, all Spark operations run on the workers, and you set this configuration on the master, so you can try to apply the S3 configuration inside mapPartitions{}.

Sandeep Purohit

If you want to use s3n:

Now, regarding the exception, you need to make sure both JARs are on the driver and worker classpaths and, if you're using client mode, make sure to distribute them to the worker nodes via the --jars flag:

Also, if you're building your uber JAR and including aws-java-sdk and hadoop-aws, there's no reason to use the --packages flag.

Yuval Itzchakov


When using cluster deploy mode, the classpath of the SparkSubmit process that gets launched only includes the Spark assembly and not spark.driver.extraClassPath. This is of course by design, since the driver actually runs on the cluster and not inside the SparkSubmit process.


However, if the SparkSubmit process, minimal as it may be, needs any extra libraries that are not part of the Spark assembly, there is no good way to include them. (I say 'no good way' because including them in the SPARK_CLASSPATH environment variable does cause the SparkSubmit process to include them, but this is not acceptable because this environment variable has long been deprecated, and it prevents the use of spark.driver.extraClassPath.)

An example of when this matters is on Amazon EMR when using an S3 path for the application JAR and running in yarn-cluster mode. The SparkSubmit process needs the EmrFileSystem implementation and its dependencies in the classpath in order to download the application JAR from S3, so it fails with a ClassNotFoundException. (EMR currently gets around this by setting SPARK_CLASSPATH, but as mentioned above this is less than ideal.)

I have tried modifying SparkSubmitCommandBuilder to include the driver extra classpath whether it's client mode or cluster mode, and this seems to work, but I don't know if there is any downside to this.

Example that fails on emr-4.0.0 (if you switch to setting spark.(driver,executor).extraClassPath instead of SPARK_CLASSPATH):

    spark-submit --deploy-mode cluster --class org.apache.spark.examples.JavaWordCount s3://my-bucket/spark-examples.jar s3://my-bucket/word-count-input.txt

Resulting Exception:

    Exception in thread "main" java.lang.RuntimeException: java.lang.ClassNotFoundException: Class com.amazon.ws.emr.hadoop.fs.EmrFileSystem not found
        at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2074)
        at org.apache.hadoop.fs.FileSystem.getFileSystemClass(FileSystem.java:2626)
        at org.apache.hadoop.fs.FileSystem.createFileSystem(FileSystem.java:2639)
        at org.apache.hadoop.fs.FileSystem.access$200(FileSystem.java:90)
        at org.apache.hadoop.fs.FileSystem$Cache.getInternal(FileSystem.java:2678)
        at org.apache.hadoop.fs.FileSystem$Cache.get(FileSystem.java:2660)
        at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:374)
        at org.apache.hadoop.fs.Path.getFileSystem(Path.java:296)
        at org.apache.spark.deploy.yarn.Client.copyFileToRemote(Client.scala:233)
        at org.apache.spark.deploy.yarn.Client.org$apache$spark$deploy$yarn$Client$$distribute$1(Client.scala:327)
        at org.apache.spark.deploy.yarn.Client$$anonfun$prepareLocalResources$5.apply(Client.scala:366)
        at org.apache.spark.deploy.yarn.Client$$anonfun$prepareLocalResources$5.apply(Client.scala:364)
        at scala.collection.immutable.List.foreach(List.scala:318)
        at org.apache.spark.deploy.yarn.Client.prepareLocalResources(Client.scala:364)
        at org.apache.spark.deploy.yarn.Client.createContainerLaunchContext(Client.scala:629)
        at org.apache.spark.deploy.yarn.Client.submitApplication(Client.scala:119)
        at org.apache.spark.deploy.yarn.Client.run(Client.scala:907)
        at org.apache.spark.deploy.yarn.Client$.main(Client.scala:966)
        at org.apache.spark.deploy.yarn.Client.main(Client.scala)
        at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
        at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)
        at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
        at java.lang.reflect.Method.invoke(Method.java:606)
        at org.apache.spark.deploy.SparkSubmit$.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:672)
        at org.apache.spark.deploy.SparkSubmit$.doRunMain$1(SparkSubmit.scala:180)
        at org.apache.spark.deploy.SparkSubmit$.submit(SparkSubmit.scala:205)
        at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:120)
        at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)
    Caused by: java.lang.ClassNotFoundException: Class com.amazon.ws.emr.hadoop.fs.EmrFileSystem not found
        at org.apache.hadoop.conf.Configuration.getClassByName(Configuration.java:1980)
        at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:2072)
        ... 27 more

The Spark programming guide explains that Spark can create distributed datasets from files on Amazon S3.

But since the pre-built 'Hadoop 2.6' packages, S3 access doesn't work with either s3n or s3a:

    // Credentials for the s3a:// scheme (Scala strings need double quotes)
    sc.hadoopConfiguration.set("fs.s3a.awsAccessKeyId", "XXXZZZHHH")
    sc.hadoopConfiguration.set("fs.s3a.awsSecretAccessKey", "xxxxxxxxxxxxxxxxxxxxxxxxxxx")
    val lines = sc.textFile("s3a://poc-XXX/access/2016/02/20160201202001_xxx.log.gz")

java.lang.RuntimeException: java.lang.ClassNotFoundException: Class org.apache.hadoop.fs.s3a.S3AFileSystem not found

This happens with every Spark version I tried: spark-1.3.1, spark-1.6.1, and even spark-2.0.0 with Hadoop 2.7.2.

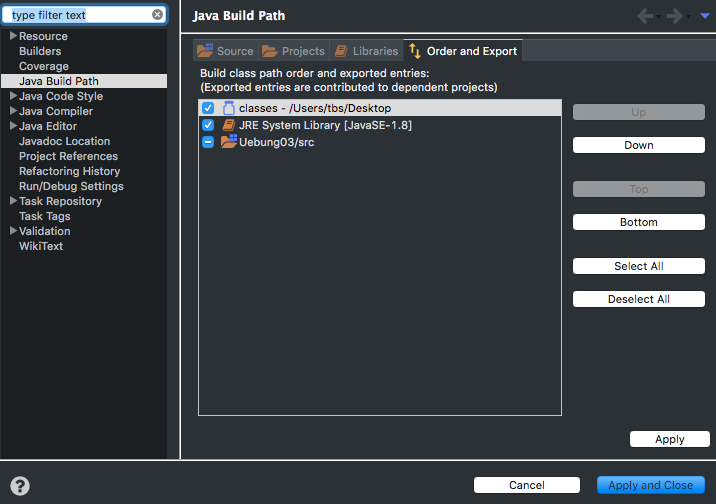

I understand this is a Hadoop issue. Are hadoop-aws-x.x.x.jar and aws-java-sdk-x.x.x.jar enough? Which environment variable do we need to set, and which file do we need to modify? Is it $CLASSPATH, or the spark.driver.extraClassPath and spark.executor.extraClassPath variables in spark-defaults.conf?

But it still works with spark-1.6.1 pre-built for Hadoop 2.4.
